
CONCERT CALL FOR SONIFICATIONS

Listening to the Mind Listening

Creative Producer: Stephen Barrass

The Listening to the Mind Listening Concert will be held at the Sydney Opera House as part of the International Conference on Auditory Display (ICAD2004) in Sydney from 6-9 July 2004. www.icad.org/icad2004

Motivation

In his acceptance speech for the 1981 Nobel Prize for Medicine, David Hubel describes how the sound of a neuron firing led to his first important discovery.

"Our first real discovery came as a surprise. We had been doing experiments for about a month ... and were not getting very far. One day we made an especially stable recording. For 3 or 4 hours we got absolutely nowhere. Then we began to elicit some vague and inconsistent responses by stimulating somewhere in the mid-periphery of the retina. We were inserting the glass slide with its black spot into the slot of the ophthalmoscope when suddenly over the audiomonitor the cell went off like a machine gun. After some fussing and fiddling we found out what was happening. The response had nothing to do with the black dot. As the glass slide was inserted its edge was casting onto the retina a faint but sharp shadow, a straight dark line on a light background. That was what the cell wanted, and it wanted it, moreover, in just one narrow range of orientations." http://www.nobel.se/medicine/laureates/1981/

Listening to the Mind Listening is a development of the technique of listening to neurons, but we will extend it to explore the neural activity of the entire brain. The goals of the concert are to

Constraints

The concert is an investigation on the boundary of art and science. The sonifications need to be musically satisfying for a general audience, scientifically interesting to neuroscientists, and help develop design knowledge in the auditory display community. In order to open up artistic possibilities, whilst at the same time providing for comparison and analysis, we are imposing some simple constraints for the sonifications.

Background

The human brain is made up of roughly 100 billion neurons, each with thousands of connections with other neurons! However, the brain is not homogeneous: it is made up of many special-purpose regions. Many of these regions are activated by sounds, starting from the cochlea, up the vestibulocochlear nerve, to the superior olive that processes directional cues, on to the pons for recognition and the thalamus that directs attention, as well as the primary and secondary auditory cortex that connect sounds with memories, emotions and thinking. Most techniques for observing neural activity are visual, but sound may provide alternative insights, especially for temporal patterns such as the well-known alpha, beta, and gamma frequency bands. Below are some starting points for exploring sonification, neural activity, and human auditory processing.

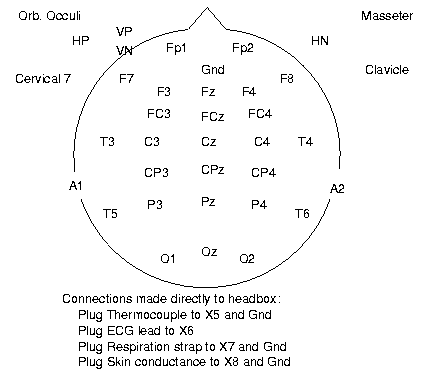

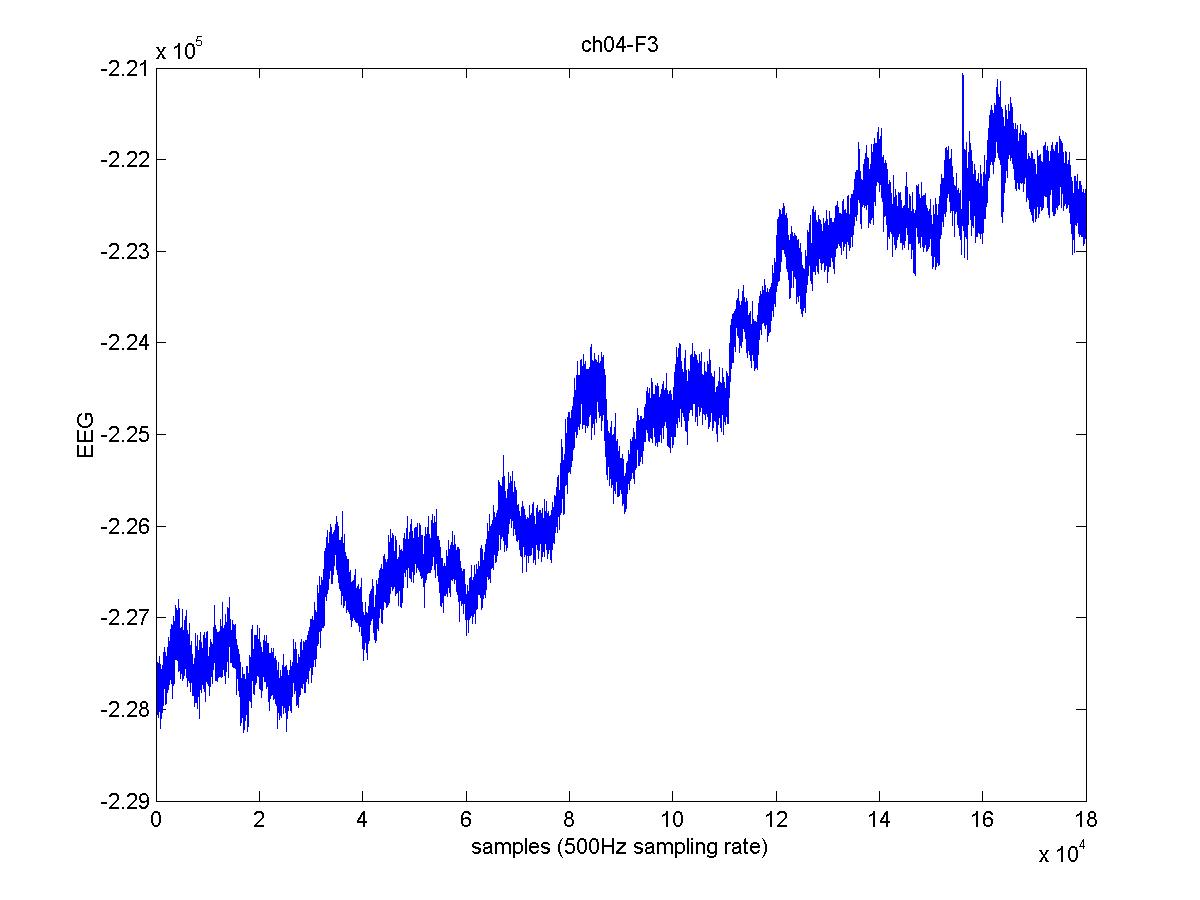

Music

The listener in our experiment was listening to a piece of music by award-winning Indigenous Australian composer David Page. The piece is 5 minutes long and has a wide dynamic range, with natural and synthesised sounds and instruments characteristic of David's blend of traditional and contemporary styles. The actual piece of music is being kept under wraps so that it does not influence the composers in their mappings from the neural data structure into sound. The mystery will be revealed at the finale of the concert when, after the ten sonifications have been played, we will hear the original piece of music.

David joined Bangarra Dance Theatre as resident composer and performer in 1991, collaborating on the music for Ninni, Praying Mantis Dreaming and the Atlanta Olympic Games flag handover ceremony in 1996, amongst other projects. He is particularly proud of his music for Ochres, which was released as a CD through Larrikin Records and won the 1995 Deadly Award for Best Soundtrack (National Indigenous Music, Sport, Entertainment and Community Awards). He went on to win that award for the next two years, with Alchemy for the Australian Ballet in 1996 and Fish for Bangarra in 1997. In 2002 David received yet another Deadly, this time for Excellence in Theatrical Score. www.bangarra.com.au/bios/dpagesfrancis.html

Data

The listener wore headphones to hear the music, and a cap with EEG sensors on it to record neural activity. The 26 sensor electrodes were arranged according to the 10-20 standard for EEG placement (faculty.washington.edu/chudler/1020.html). The sensors are labelled by the region of the brain they sit over (F=Frontal, T=Temporal, C=Central, P=Parietal, O=Occipital), followed by either a 'z' for the midline or a number that increases with distance from the midline. Odd numbers (1,3,5) are on the left hemisphere and even numbers (2,4,6) on the right, e.g. T4 is on the right temporal lobe, above the right ear.

An additional 10 sensors were used to record heart-rate, skin conductance, eye movements, breathing and other data. The sensor signals were recorded as interleaved channels of signed 32-bit integers at a rate of 500 samples per second. The channels were separated into individually named files and converted to ASCII format for simplicity of loading on different systems. The data was recorded at the Brain Resource Company (www.brainresource.com) by Evian Gordon, Daniel Hermens, and Patrick Hopkinson, in collaboration with Stephen Barrass, on 21 November 2003.
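The labelling rule above can be sketched in a few lines of Python. This is only an illustration of the rule as described in this call: the function name `describe_electrode` is hypothetical, reading a multi-letter prefix such as `CP` as a combination of regions is an assumption, and labels that use letters outside the five listed here (for example `Fp1`) are rejected rather than guessed.

```python
import re

# Region letters exactly as listed in the call.
REGIONS = {
    "F": "Frontal",
    "T": "Temporal",
    "C": "Central",
    "P": "Parietal",
    "O": "Occipital",
}


def describe_electrode(label):
    """Decode a label such as 'T4' or 'Cz' into its region(s) and side.

    Hypothetical helper: prefixes with several letters (e.g. 'CP3') are
    assumed to name a position between the listed regions.
    """
    match = re.fullmatch(r"([FTCPO]+)(z|[1-9]\d*)", label)
    if match is None:
        raise ValueError(f"label not covered by this scheme: {label!r}")
    letters, suffix = match.groups()
    regions = [REGIONS[letter] for letter in letters]
    if suffix == "z":
        side = "midline"
    elif int(suffix) % 2 == 1:
        side = "left"   # odd numbers are on the left hemisphere
    else:
        side = "right"  # even numbers are on the right hemisphere
    return {"label": label, "regions": regions, "side": side}


if __name__ == "__main__":
    for name in ("T4", "Cz", "F3"):
        print(describe_electrode(name))
```

A decoder like this may be handy when a mapping treats left, right and midline channels differently, for instance when deciding which loudspeaker a channel is routed to.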

Download the zipped data in ASCII signed 32-bit integer format (~10 MB) from

Download zipped data plots in jpg format (~2 MB) from
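Once the archive is unpacked, each channel file can be read with a few lines of Python. The sketch below assumes the integers in a file are separated by whitespace or newlines, which this call does not specify; the function names and the file name `Cz.txt` in the usage comment are hypothetical.

```python
from pathlib import Path

EEG_RATE_HZ = 500  # recording rate stated in this call
INT32_MIN, INT32_MAX = -2**31, 2**31 - 1


def load_channel(path):
    """Read one channel file into a list of ints.

    Assumes whitespace-separated ASCII integers; adjust the split if the
    real files use another delimiter.
    """
    tokens = Path(path).read_text(encoding="ascii").split()
    samples = [int(token) for token in tokens]
    for value in samples:
        if not INT32_MIN <= value <= INT32_MAX:
            raise ValueError(f"value outside signed 32-bit range: {value}")
    return samples


def duration_seconds(sample_count, rate_hz=EEG_RATE_HZ):
    """Length of a recording in seconds at the given sample rate."""
    return sample_count / rate_hz


# Usage (hypothetical file name):
#   samples = load_channel("Cz.txt")
#   print(duration_seconds(len(samples)))
```

If a recording covers exactly the 5-minute piece, a channel holds about 150,000 samples, since `duration_seconds(150_000)` is 300.0 seconds. At the 44.1 kHz output rate required for soundfiles, each EEG sample then spans 44100 / 500 = 88.2 audio samples when played back in real time.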

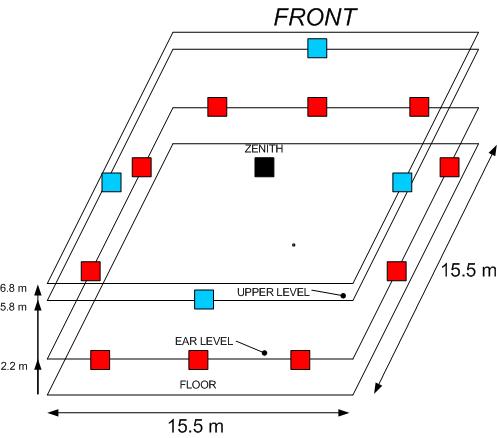

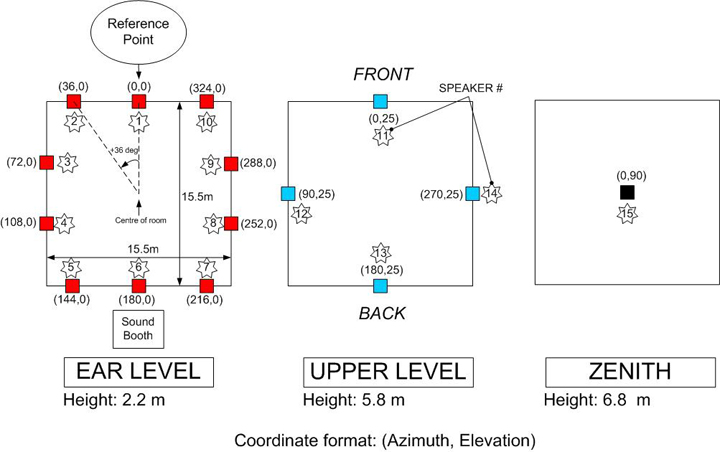

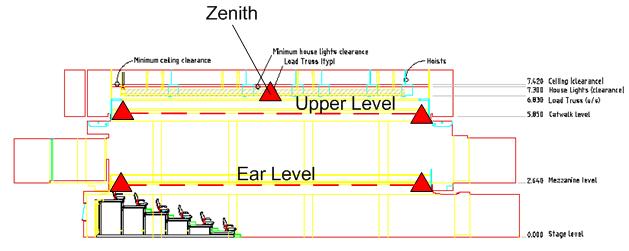

The Opera House Studio and Sound System

The Sydney Opera House Studio is an intimate, flexible space designed primarily for new music and contemporary performance. The seating capacity ranges from 220 to 318, depending on the configuration. The floor area is approximately 15 m x 15 m, within which flexible tiered seating banks and cabaret-style seating may be arranged. There are two rows of fixed seating on each of the four sides of the gallery. There is a powered overhead grid for hanging speakers, with cabling points that connect to a 32-channel mixing console. Layout plans and technical specifications of the Studio are available from www.sydneyoperahouse.com/h/at_venues_fs2.html.

The speaker array consists of 10 speakers placed at ear level, 4 speakers placed 5.8 m above the floor, and one zenith speaker placed 6.8 m above the centre of the room, as shown below.

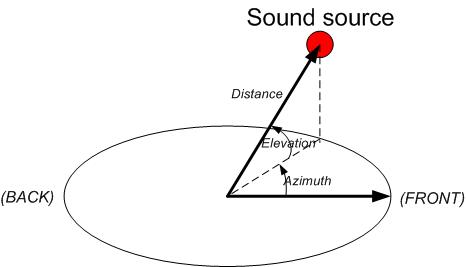
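Composers who work in Cartesian coordinates may want to convert the polar speaker positions. The sketch below is one way to do so, assuming azimuth is measured in degrees in the horizontal plane and elevation in degrees above it; the convention actually used for the Studio array is not stated here, and the distance in the usage comment is a placeholder, not a measured value. Only the layer counts are taken from this call.

```python
import math

# Speaker counts per layer, as stated in this call.
LAYERS = {"ear level": 10, "upper level": 4, "zenith": 1}


def polar_to_cartesian(azimuth_deg, elevation_deg, distance_m):
    """Convert a polar speaker position to (x, y, z) in metres.

    Assumed convention: azimuth 0 points along +x and increases towards
    +y; elevation 0 is the horizontal plane and 90 is straight up.
    """
    azimuth = math.radians(azimuth_deg)
    elevation = math.radians(elevation_deg)
    horizontal = distance_m * math.cos(elevation)
    x = horizontal * math.cos(azimuth)
    y = horizontal * math.sin(azimuth)
    z = distance_m * math.sin(elevation)
    return (x, y, z)


# Usage with a placeholder distance (not a measured value):
#   polar_to_cartesian(0.0, 90.0, 5.0)  # a speaker directly overhead
#   sum(LAYERS.values())                # 15 speakers in total
```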

Here are the polar coordinates for each speaker from the centre of the room:

And here are the speaker layers (ear level, upper level and zenith):

Submissions

Submissions need to be received by 6 April 2004 to allow for review and selection. Submissions are open to everyone, and will be reviewed by an international panel. The panel will select ten pieces for the concert, audio CD and booklet. Submissions should consist of a description document and accompanying soundfiles. The description document should have a name made up from the surnames of the contributors, e.g. SmithBrownJones.pdf. The document should be in PDF format, laid out according to the template at www.icad.org/icad2004/submission/. The document can be up to 4 pages long and must include the title of the piece, names and affiliations of contributors, a description of the mapping used to sonify the data, and a list of accompanying soundfiles.

Formats

Here are the different possible approaches for submitting your sonification:

In all cases, the soundfiles must be individual 16-bit PCM mono .wav files at 44.1 kHz. The soundfiles should have the same name as the description document, with an additional unique ID in the range 01-36 for each, e.g. SmithBrownJones01.wav, SmithBrownJones02.wav, ..., SmithBrownJones16.wav. The Lake Huron system will be used to mix the soundfiles to a binaural form so that the selection panel can review the pieces through headphones.

Further enquiries can be emailed to conference@icad.org with the subject line

For discussions please email the ICAD list at icad@santafe.edu.

Electronic submissions can be uploaded by ftp to concert.ict.csiro.au

CD-ROM submissions can be sent by post to: Stephen Barrass

FTP Note

You do not need to link your soundfiles into the description document; just make sure to use the naming convention. It is simplest if you upload your files in a single zipped archive file. You can upload your Listening to the Mind Listening submission by anonymous ftp to concert.ict.csiro.au. Please use your email address as the password. The ftp site is not readable, so you will not be able to see your own, or anyone else's, submissions once they have been uploaded.
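Because the ftp site cannot be read back after upload, it may be worth checking each soundfile against the format and naming rules beforehand. The sketch below uses Python's standard `wave` module; `check_soundfile` is a hypothetical helper, not an official ICAD tool, and it covers only the rules stated in this call (16-bit PCM, mono, 44.1 kHz, document name plus an ID from 01 to 36).

```python
import os
import re
import wave


def check_soundfile(path, document_stem):
    """Return a list of problems found in one soundfile (empty if none).

    document_stem is the description document name without '.pdf',
    e.g. 'SmithBrownJones'.
    """
    problems = []
    name = os.path.basename(path)
    match = re.fullmatch(r"(.+?)(\d{2})\.wav", name)
    if match is None or match.group(1) != document_stem:
        problems.append(f"name should be {document_stem}NN.wav, got {name}")
    elif not 1 <= int(match.group(2)) <= 36:
        problems.append(f"ID {match.group(2)} is outside the range 01-36")
    try:
        with wave.open(path, "rb") as wav:
            if wav.getnchannels() != 1:
                problems.append("file is not mono")
            if wav.getsampwidth() != 2:
                problems.append("samples are not 16 bit")
            if wav.getframerate() != 44100:
                problems.append("sample rate is not 44.1 kHz")
    except wave.Error as error:
        # The wave module rejects non-PCM or malformed files here.
        problems.append(f"not a readable PCM .wav file: {error}")
    return problems


# Usage:
#   for issue in check_soundfile("SmithBrownJones01.wav", "SmithBrownJones"):
#       print(issue)
```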

Sponsors
